The Many Benefits of a Distributed, Multi-Location Data Center Setup


As a CIO, CTO, or other IT executive, have you ever considered hosting your IT infrastructure, including your cloud environment, in multiple geographically dispersed data centers? Organizing your data center environment this way has many benefits. In this article, we take a closer look at the potential of a multi-data center architecture and the benefits it can bring to your organization.

Why a Multi-Location Data Center Setup Is an Essential Part of Your IT Strategy

Few IT executives at small and medium-sized businesses will have considered an infrastructure design with multiple data centers spread across different geographic regions, potentially across national borders. Such a setup used to be within reach only of the CIOs and CTOs of larger organizations, or of organizations with an absolute need for it, and for obvious reasons. Until recently, maintaining IT infrastructure across several data centers was quite expensive. In addition, many organizations had no pressing need for a multi-data center configuration. But times have changed.

Enterprise organizations with a significant IT budget, a sizable IT department, and perhaps several IT teams in different countries will probably be accustomed to an infrastructure design in which multiple dispersed facilities play a role. The same goes for hospitals, for example, where maximum redundancy (and thus business continuity) and healthcare industry compliance are of vital importance.

With current technological advancements and changing business demands, however, a multi-data center design is becoming increasingly worth considering. In addition to maximizing IT infrastructure resilience, goals can include establishing an infrastructure presence ‘at the edge’ and making optimal use of (distributed) cloud infrastructure, to name a few. A multi-data center setup is now feasible for any organization that, until recently, did not perceive the need for it or found the cost too high.

Creating Ultimate Resilience

To avoid data loss and ensure business continuity, building resilience into an IT infrastructure is vital, especially for business-critical applications. Cloud is inherently very resilient, but it may not be appropriate for every business, situation, or application. As a CIO, CTO, or IT manager, you may well end up with a hybrid IT environment in which public or private cloud plays a role, but in which your own data center environment, whether colocated or not, also has its place.

Enterprises, financial institutions, hospitals, and other organizations with business-critical applications have traditionally used multiple data centers to house their IT infrastructure. One reason may be that they have offices in different locations. Another is that these organizations want to be on the safe side, whether or not industry regulations force them to, while data privacy and compliance demands may also play a role. Most data centers have redundant components such as cooling and power supply, which improves the resilience of the data center environment and thus of the IT infrastructure as a whole. However, as an administrator of business-critical applications, you must take all types of calamities into account, even exceptional ones with a low probability of actually occurring.

Applying redundancy at the data center level can be a must for enterprises, banks, hospitals, and other organizations with business-critical applications, even if your data center provider has implemented the highest redundancy levels to meet the strictest uptime requirements. Instead of relying on a single data center, one of the highest levels of redundancy can be achieved with a twin data center setup using synchronous replication: direct data synchronization between two identical IT environments located geographically apart from each other. The sites are typically kept a certain minimum distance apart to meet risk mitigation needs, while the distance is limited to meet potential latency requirements. A setup like this spreads the risk of, say, a fire, a power outage, or network issues caused by excavation work. The concept can even be extended to more than two data centers.
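To make the distance trade-off more tangible, the rough sketch below estimates the propagation delay that every synchronous write incurs between two sites. It assumes that light travels through fiber at roughly 200,000 km/s (about 5 microseconds per kilometer one way) and ignores switching, protocol, and storage overhead, so real-world figures will be higher. The function names and the `route_factor` parameter are illustrative assumptions, not Worldstream specifications.

```python
# Rough estimate of the round-trip propagation delay that each synchronous
# write incurs between two data centers.
# Assumption: light travels through fiber at about 200,000 km/s.

FIBER_SPEED_KM_PER_MS = 200.0  # ~200,000 km/s expressed per millisecond


def fiber_rtt_ms(distance_km: float, route_factor: float = 1.0) -> float:
    """Round-trip propagation delay in milliseconds.

    route_factor is a hypothetical multiplier for fiber routes that are
    longer than the straight-line distance between the two sites.
    """
    if distance_km < 0 or route_factor < 1.0:
        raise ValueError("distance must be >= 0 and route_factor >= 1.0")
    one_way_ms = distance_km * route_factor / FIBER_SPEED_KM_PER_MS
    return 2 * one_way_ms


def max_distance_km(rtt_budget_ms: float, route_factor: float = 1.0) -> float:
    """Largest site separation that still fits a round-trip latency budget."""
    if rtt_budget_ms < 0 or route_factor < 1.0:
        raise ValueError("budget must be >= 0 and route_factor >= 1.0")
    return rtt_budget_ms * FIBER_SPEED_KM_PER_MS / (2 * route_factor)


if __name__ == "__main__":
    for km in (10, 50, 100, 500):
        print(f"{km:>4} km apart: ~{fiber_rtt_ms(km):.2f} ms added per write")
```

Under these assumptions, two sites 100 km apart add about 1 ms of propagation delay to every synchronous write, which is why the distance between twin data centers is usually bounded by the latency the application can tolerate.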

With a twin data center design in which physically distinct infrastructure connects the two data centers point-to-point, or a setup involving even more data centers, users should not lose any crucial data, and they should not notice an outage if one of the data centers experiences an interruption. The load is then seamlessly transferred to the other data center.
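The idea can be illustrated with a toy model. The sketch below is a simplified illustration, not a description of any specific product: a write is acknowledged only after every reachable site has stored it, so the surviving site can keep serving reads when the other one fails. The class names are hypothetical, and the sketch deliberately leaves out what a real system needs most, such as resynchronizing a site when it comes back online.

```python
class DataCenter:
    """One site holding a full copy of the data (names are illustrative)."""

    def __init__(self, name):
        self.name = name
        self.online = True
        self.data = {}


class TwinSetup:
    """Toy model of synchronous replication between two sites."""

    def __init__(self, site_a, site_b):
        self.sites = [site_a, site_b]

    def _online_sites(self):
        online = [site for site in self.sites if site.online]
        if not online:
            raise RuntimeError("both data centers are unavailable")
        return online

    def write(self, key, value):
        # The write is acknowledged only after every reachable site stored it,
        # so the surviving site always holds the latest acknowledged data.
        online = self._online_sites()
        for site in online:
            site.data[key] = value
        return [site.name for site in online]

    def read(self, key):
        # Serve the read from the first site that is still reachable.
        return self._online_sites()[0].data[key]


if __name__ == "__main__":
    twin = TwinSetup(DataCenter("site-a"), DataCenter("site-b"))
    twin.write("order-42", "paid")
    twin.sites[0].online = False   # simulate an outage at the first site
    print(twin.read("order-42"))   # prints: paid
```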

Worldstream’s company-owned data centers in the Netherlands feature the highest redundancy levels, with all enterprise-grade certifications and controls in place. Our international data center environment, for example via partner maincubes in Frankfurt, Germany, also features certified military-grade security and redundancy. Even then, a multi-data center setup might be desirable for those with the highest availability and risk mitigation needs. For these and other reasons, Worldstream has developed its Multi-Location data center solution, which also allows for real-time movement of workloads. Thanks to its software-defined capabilities, the solution is offered at an SMB price point, which puts this enterprise-grade functionality within reach of a large group of users. It is available through the Worldstream Elastic Network (WEN), our software-defined network powered by Worldstream’s physical global network backbone.

Edge Data Center Deployment

Another argument for choosing a multi-location data center design is the global trend toward edge computing, where applications need to run in a distributed manner close to their users.

Data center resilience and business continuity are also among the main advantages of edge computing, but there is more. With edge computing, sites at the edge can continue to function independently during an outage. Because the infrastructure is local, it is more redundant than a setup in a centralized data center. That is just a fraction of the benefits, though. Across all sectors and use cases, edge computing may help improve user experiences by enabling quicker, more consistent, and more reliable services.

Edge computing through a multi-location data center design allows for decision making based on real-time data in fast-paced environments. The edge is where real-time decision making can excel. If the time available for data processing and decision making is so short that you cannot afford the delay of transmitting the data across the Internet, the work can probably best be done at the network edge. There, decisions can be made in a decentralized way, based on a combination of data from multiple local sensors.
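A simple way to reason about this is to compare the decision deadline with the total response time of each candidate location. The sketch below is a minimal illustration under stated assumptions: the `Site` fields and the example latency figures are hypothetical, and a real placement decision would also weigh cost, capacity, and compliance.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Site:
    name: str
    rtt_ms: float         # network round trip from the data source to the site
    processing_ms: float  # time the site needs to compute a decision


def sites_within_deadline(sites, deadline_ms):
    """Return the sites whose total response time fits the deadline, fastest first."""
    def total(site):
        return site.rtt_ms + site.processing_ms

    fitting = [site for site in sites if total(site) <= deadline_ms]
    return sorted(fitting, key=total)


if __name__ == "__main__":
    candidates = [
        Site("central-dc", rtt_ms=40.0, processing_ms=3.0),  # hypothetical values
        Site("edge-dc", rtt_ms=2.0, processing_ms=8.0),      # hypothetical values
    ]
    for deadline in (20, 50):
        names = [site.name for site in sites_within_deadline(candidates, deadline)]
        print(f"deadline {deadline} ms -> {names}")
```

With these example numbers, only the edge location meets a 20 ms deadline, even though the central site has the faster processing time.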

Data processing at the edge provides great benefits for Internet of Things (IoT) applications, for example, because the processing capabilities are brought as close as feasible to the IoT devices. Running IoT computing tasks at the edge can save time and resources compared to transferring data to central data centers for processing, and processed data becomes available more quickly at its target IoT destinations. In short, edge computing keeps the processing of IoT data close to the source.

For businesses deploying IoT-based products and services, this might result in significant IT infrastructure advantages in terms of performance, latency, security, and cost.

Another development that makes edge computing through a multi-location data center setup interesting is the rapid increase in data production and processing in general, reinforced by the use of AI- and ML-powered applications. As data creation increases, the ‘data pipelines’ between the various Internet Exchanges across the globe may grow too big and too complex. If all of these expanding volumes of data are first sent across the Internet before being processed, then in the coming years the Internet may simply not be equipped to handle all the data that has to be moved and processed.

A small side note is in order here: Worldstream’s low-latency global network backbone runs at only 45% utilization, so it has ample bandwidth available to accommodate future network growth for our clients. More generally, though, it would be wise to move some computing tasks to the edge in the interest of the Internet infrastructure overall. Future applications will increasingly rely on machine learning (ML) and artificial intelligence (AI), which further increases the burden of Internet traffic and places even more emphasis on network speed and capacity as well as on data processing at the edge.

Enhancing Security, Latency, and Cost Efficiency

One prevalent misconception about the edge is that it would somehow replace cloud computing. In practice, edge and cloud should cooperate. The intelligent approach presupposes that a decentralized edge and a centralized cloud are in sync. Whether public, hybrid, or private, a cloud environment can provide a platform to centralize all data and use it as and where it is required throughout an organization.

Deploying IT infrastructure at the edge through a multi-location data center setup can also benefit your security architecture. Admittedly, edge computing may increase the potential attack surface for those with malicious intent, but it may significantly reduce the potential impact of an attack on the company as a whole. In addition, when less data is transferred over the Internet, less data can be intercepted, which may also benefit a company’s security. Finally, edge computing helps organizations resolve challenges with data sovereignty, local compliance, and privacy legislation.

When it comes to network latency, take an autonomous car as an example: the relevance of the data being processed may decrease with processing time. Transferring and processing data quickly is important because much of the data an autonomous vehicle gathers ‘at the edge’ can be worthless after a few seconds. Particularly on a crowded road, milliseconds count for autonomous driving, which increases the need for the lowest possible latency when transferring data.

Another example where milliseconds of processing delay may count, and where the lowest network latencies can therefore play a key role, is Industry 4.0. Here, AI-based technologies continuously need to monitor all parts of a production process to maintain data consistency. In Industry 4.0 setups, there is frequently insufficient time to send data back and forth between a manufacturing location on the one side and a centralized data center or cloud on the other. In circumstances such as equipment failures and potentially fatal accidents, instant data analysis might be indispensable. Reaction times improve when latency is reduced by pushing data processing to the edge, to the spot where the data is created.

When it comes to the cost of transferring, maintaining, and safeguarding data for edge usage, spending the same amount of money on all data is not the smartest choice, because not all data is the same and not all data holds the same value for an organization. Some data is vital to business operations, while other data is of less value or even useless. As a company, you might save money by keeping as much data at edge locations as possible rather than using expensive bandwidth to move it back and forth between the edge and a central location. Here too, a multi-location data center design may benefit edge use cases and allow for cost savings.
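As a back-of-the-envelope illustration of this reasoning, the sketch below splits records by a value label and computes which share of the data volume no longer has to leave the edge location. The labels (`vital`, `routine`, `noise`) and the sizes are hypothetical; each organization would define its own classification.

```python
def split_by_value(records, forward_levels=frozenset({"vital"})):
    """Split records into those forwarded centrally and those kept at the edge.

    Each record is a (value_level, size_bytes) tuple. The level names are
    hypothetical labels that an organization would define for itself.
    """
    forward, keep_local = [], []
    for level, size in records:
        target = forward if level in forward_levels else keep_local
        target.append((level, size))
    return forward, keep_local


def transfer_saving(records, forward_levels=frozenset({"vital"})):
    """Fraction of the total bytes that no longer has to leave the edge."""
    total = sum(size for _, size in records)
    if total == 0:
        return 0.0
    forward, _ = split_by_value(records, forward_levels)
    sent = sum(size for _, size in forward)
    return 1 - sent / total


if __name__ == "__main__":
    sample = [("vital", 100), ("routine", 300), ("noise", 600)]
    print(f"Share of bytes kept at the edge: {transfer_saving(sample):.0%}")
```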

Voice-over-IP Applications

Other applications for which a multi-location data center design can be interesting are latency-sensitive operations such as media playback and Voice-over-IP (VoIP). A media player will most probably drop out if there is excessive delay in delivering media to users at the edge. The same goes for VoIP. If latency is too high, the quality of conversations between users at edge locations will falter, which can eventually lead to dropped calls. That is utterly unacceptable if you are a provider offering such a service to customers. As a provider, you will probably also want to offer service level agreements (SLAs) with your VoIP services. A multi-location data center setup makes latency easier to control and increases the likelihood that VoIP calls run flawlessly, which contributes to customer satisfaction.

A provider with numerous data centers spread across a geographical region, whether a country, a continent, or the world, will be able to offer a more reliable experience. The closer the data centers are to the users, the higher the quality and reliability of a VoIP call will be.

With VoIP telephony, every call being made generates data packets. The amount of data a data center must manage rises in direct proportion to the number of telephone calls. One VoIP service provider might need to handle VoIP telephone calls for thousands of businesses. This implies that millions of data packets might be compressed, sent, and received globally.
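To get a feel for the numbers, the sketch below estimates the packet rate and IP-layer bandwidth for a given number of concurrent calls. The defaults are assumptions chosen for illustration: the G.711 codec at 64 kbit/s, one packet every 20 ms, and 40 bytes of IPv4, UDP, and RTP headers per packet. Other codecs and packetization intervals give different results, and link-layer overhead is ignored.

```python
def voip_load(concurrent_calls, codec_kbps=64, packet_ms=20, header_bytes=40):
    """Estimate packet rate and IP-layer bandwidth for concurrent calls.

    Returns (packets_per_second, megabits_per_second), counting both
    directions of every call. Defaults assume G.711 with 20 ms packets.
    """
    if concurrent_calls < 0 or packet_ms <= 0:
        raise ValueError("calls must be >= 0 and packet_ms must be > 0")
    packets_per_stream = 1000 / packet_ms                      # 50 per second
    payload_bytes = codec_kbps * 1000 / 8 * packet_ms / 1000   # 160 bytes
    bits_per_stream = (payload_bytes + header_bytes) * 8 * packets_per_stream
    streams = concurrent_calls * 2                             # one per direction
    return packets_per_stream * streams, bits_per_stream * streams / 1_000_000


if __name__ == "__main__":
    for calls in (1, 1_000, 10_000):
        pps, mbps = voip_load(calls)
        print(f"{calls:>6} calls: {pps:>9,.0f} packets/s, {mbps:,.2f} Mbit/s")
```

Under these assumptions, 10,000 concurrent calls already generate about one million packets per second, which shows how quickly the load on a single location grows with the number of calls.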

As a VoIP service provider, you want to be certain that your infrastructure can accommodate client demand, both during temporary traffic spikes and when customer growth requires you to scale up. The amount of data that a centralized data center can store and handle has its limits, though, and the performance of a VoIP application might suffer when everything is centralized in a single data center. Think of it as a funnel: if too much data is sent to one centralized data center, phone conversations may experience jitter and latency, and call quality drops.

With a multi-location data center setup, these issues are less likely because data packets are processed close to the users. As a VoIP provider, you can then be more confident that call quality remains at a very high level, and you can offer better SLAs to your customers.

Network Backbone, EVPN VXLAN Technology

In case you think that deploying and managing data center infrastructure in multiple geographic locations will be very complex and hard to achieve, we can reassure you. The key to flawlessly managing IT infrastructure across multiple data center sites is the network. For enterprise organizations, this is usually familiar territory: they have long invested (and still invest) large sums in setting up the network infrastructure adequately before building the remaining IT infrastructure on it. For SMBs, this might be new, but they can skip the cost-intensive investments that were previously required to make a multi-location data center deployment happen.

Worldstream’s R&D and networking team has already worked out the complexities for customers, and has kept it from becoming a costly endeavor, by integrating EVPN (Ethernet Virtual Private Network) VXLAN (Virtual Extensible LAN) technology into its offerings. EVPN VXLAN is a core technology of Worldstream’s recently developed proprietary software-defined network, which builds on the global Worldstream network backbone. As a result, clients can elastically deploy and manage all sorts of data center services at edge data center locations, including multiple data centers, networking infrastructure, and cybersecurity functions.

With our EVPN VXLAN-based technology, a physical connection to an existing data center is still required. So, it is not a fully virtualized infrastructure. It means that our physical global network backbone must have a presence in a data center that you want to include in your multi-location data center infrastructure.
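For readers curious about what VXLAN adds on the wire, the sketch below builds and parses the 8-byte VXLAN header as defined in RFC 7348. The header carries a 24-bit VXLAN Network Identifier (VNI), which is what allows millions of isolated Layer 2 segments to share one physical network. This is a generic illustration of the standard, not a description of how Worldstream’s network assigns or uses VNIs, and the helper names are hypothetical.

```python
import struct

VXLAN_FLAG_VNI_VALID = 0x08   # "I" flag from RFC 7348
MAX_VNI = 2**24 - 1           # 24-bit identifier: about 16.7 million segments


def build_vxlan_header(vni: int) -> bytes:
    """Pack the 8-byte VXLAN header for a given VXLAN Network Identifier."""
    if not 0 <= vni <= MAX_VNI:
        raise ValueError(f"VNI must be between 0 and {MAX_VNI}")
    # First 32 bits: flags byte followed by 24 reserved bits.
    # Second 32 bits: 24-bit VNI followed by 8 reserved bits.
    return struct.pack("!II", VXLAN_FLAG_VNI_VALID << 24, vni << 8)


def parse_vni(header: bytes) -> int:
    """Extract the VNI from an 8-byte VXLAN header."""
    if len(header) < 8:
        raise ValueError("a VXLAN header is 8 bytes long")
    flags_word, vni_word = struct.unpack("!II", header[:8])
    if not (flags_word >> 24) & VXLAN_FLAG_VNI_VALID:
        raise ValueError("VNI-valid flag is not set")
    return vni_word >> 8


if __name__ == "__main__":
    header = build_vxlan_header(5000)
    print(header.hex(), parse_vni(header))
```

In a real deployment, this header sits inside a UDP datagram that the network carries between sites, while the EVPN control plane distributes the information about which addresses are reachable behind which endpoint.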

Previously, this required a costly Data Center Interconnect (DCI) architecture that connects multiple data centers through point-to-point connections. Whenever a multi-location data center infrastructure was expanded, this exercise had to be repeated, which could result not only in significant costs but also in long lead times. A DCI architecture of this kind does not offer the flexibility that our EVPN VXLAN-based network technology does.
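A quick calculation shows why expanding a point-to-point design becomes expensive. The sketch below assumes the worst case, in which every data center is linked directly to every other one (a full mesh), and compares that with a shared network fabric to which each site attaches once. Real DCI designs do not always use a full mesh, so treat the figures as an upper bound.

```python
def full_mesh_links(sites: int) -> int:
    """Point-to-point links needed to connect every pair of sites directly."""
    if sites < 0:
        raise ValueError("number of sites must be >= 0")
    return sites * (sites - 1) // 2


def links_added_by_next_site(sites: int) -> int:
    """New point-to-point links required when one more site joins a full mesh."""
    return full_mesh_links(sites + 1) - full_mesh_links(sites)


if __name__ == "__main__":
    print("sites  full-mesh links  fabric attachments")
    for n in (2, 3, 5, 10):
        # With a shared fabric, each site needs a single attachment.
        print(f"{n:>5}  {full_mesh_links(n):>15}  {n:>18}")
```

With five sites, a full mesh needs ten links, and adding a sixth site requires five more. With a shared fabric, the sixth site needs only its own attachment.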

Adding Distributed Cloud

EVPN VXLAN-based network technology does more than enable a multi-location data center infrastructure. A network design like this lets companies, from SMBs to enterprises, flexibly and seamlessly interconnect all forms of computing, network, and cybersecurity resources. This means our network architecture can meet the most stringent organizational requirements for hybrid IT, even at the (network) edge. For example, cloud instances, bare metal hardware, virtual machines (VMs), and cybersecurity solutions can be hosted at virtually any data center location of choice, whether company-owned or provided by a colocation provider. Thanks to these interconnected resources, workloads can even be transferred and relocated ad hoc.

Yes, cloud computing is also an option to integrate into a distributed multi-location data center infrastructure like this. It is not just about housing physical servers in physical data centers at multiple locations. A distributed cloud infrastructure housed at edge locations can bring all kinds of benefits, especially given the increasing use of artificial intelligence (AI) and machine learning (ML) in applications. Distributed cloud can help to flexibly handle the data spikes and rapidly growing data volumes that may result from the AI/ML trend.

The EVPN VXLAN-based network from Worldstream provides the highest level of network flexibility, while the cloud provides flexibility in terms of storage and computing capacity, including quick and precise data processing at edge locations. These two advantages can be combined relatively easily through the Worldstream Elastic Network (WEN). With a multi-location data center setup built on top of it, the network can be used to spread the flexible processing and storage capacity of cloud computing across locations.

Worldstream Multi-Location Data Center Offering

Worldstream was founded in 2006 by childhood friends with a shared passion for gaming. Dissatisfied with the high costs and unreliability of game servers, they came up with the idea of offering better solutions. Since then, the Westland-based IT company has grown into an international provider of IT infrastructure (IaaS).

Worldstream aims to uncomplicate the lives of IT leaders at tech companies. As a provider of data center, hardware, and network services, Worldstream serves various business markets, including Managed Service Providers (MSPs), System Integrators (SIs), Independent Software Vendors (ISVs), and web hosting companies. The key business objective of Worldstream is to give IT leaders peace of mind by providing high-quality infrastructure, industry-leading service, and strong partnerships that will get them excited about their IT infrastructure again.

Backed by its proprietary global backbone, Worldstream offers solid Infrastructure-as-a-Service (IaaS) solutions, which include highly customizable bare metal servers with intelligent DDoS protection and more. For organizations that want to deploy their IT infrastructure in more than one physical location, we have several offerings that support data center deployments across Europe. You can make direct links to well-known public cloud providers with Cloud On-Ramp, or cross connect to other data centers. Would you rather have your physical infrastructure in multiple geographic locations? Our solutions are available in multiple locations, such as Frankfurt. If you want to use Worldstream’s services at your office location, we can facilitate a direct link to your business.

Worldstream’s Infrastructure-as-a-Service solutions include private cloud, public cloud, and several storage solutions, such as block and object storage.

You might also like:

- 7 Benefits of Using Public Cloud Infrastructure.

- The difference between Bare Metal Cloud and Dedicated Servers.

- What are your options against a growing number of DDoS attacks?

Have a question for the editor of this article? You can reach us here.

Latest blogs

The 4 Elements of the Worldstream DNA

Knowledge blog

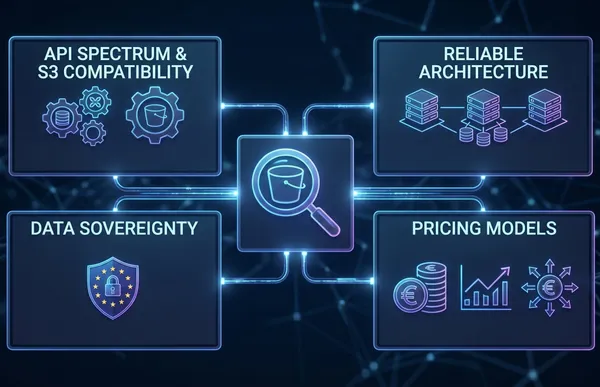

S3 Object Storage in Europe: What to Evaluate Before You Store a Single Byte

Knowledge blog

DDR5 Memory Prices Surged 307%. Here Is What That Means for Your Infrastructure Budget.

News

Pricing update. Price adjustment effective May 1, 2026

News

Worldstream and Cubbit launch independent, sovereign S3 cloud storage for Dutch enterprises

News

The Great Recalibration: Cloud Repatriation, Egress Economics, and Hybrid Architectures for 2026

Knowledge blog