Which Type of Dedicated Server Storage to Choose: NVMe, SATA SSD, or HDD?


The drive type you choose can significantly affect the performance of a dedicated server. Whether you pick NVMe SSD, SATA SSD, or HDD will influence the performance and efficiency of your server, regardless of whether you’re running Virtual Private Servers (VPSs) on top of dedicated servers or managing the dedicated servers themselves.

Executive Summary – A dedicated server’s performance is greatly influenced by the choice of drive type. The introduction of solid-state drives in the 1980s marked a major change from conventional HDDs. SSDs using NAND flash memory provide faster data access, longer lifespans, and enhanced dependability. For most server systems, processor performance is no longer the main constraint. Instead, storage infrastructure has emerged as the likely limiting factor.

Web hosting firms have traditionally preferred HDDs. Because of the mechanical nature of HDDs, however, SSDs are more efficient. It’s also crucial to understand the differences between SATA SSDs and the more recent NVMe SSDs. NVMe SSDs significantly lower latency and boost data transfer rates by using PCIe rather than SATA. These technological advances are essential for sectors that need low latency, such as healthcare, gaming, and military applications.

NVMe’s introduction has also accelerated advances in machine learning (ML) and artificial intelligence (AI) by enabling the quick analysis of enormous datasets. Alongside this, RAID technology combines multiple drives in a dedicated server, trading off redundancy, capacity, and performance depending on how the server is set up.

While HDDs are still a more affordable option for long-term data storage, there is no denying that SSDs outperform HDDs in terms of performance and dependability. Some of these limitations can be reduced by RAID arrangements, which improve HDD performance and reliability.

It’s critical to consider budget, performance needs, and application type when choosing the best storage option for a dedicated server. HDDs, SSDs, and RAID-enabled dedicated servers each have their own role, and the choice should be made with the circumstances in mind. Remember that picking the right technology for a given set of requirements is just as important as picking the most cutting-edge option.

What type is the best for storage? NVMe or SATA SSDs or HDDs?

This article gathers evidence in support of choosing NVMe SSD hosting as your preferred server storage option while demystifying all three options to assist you in making an educated decision.

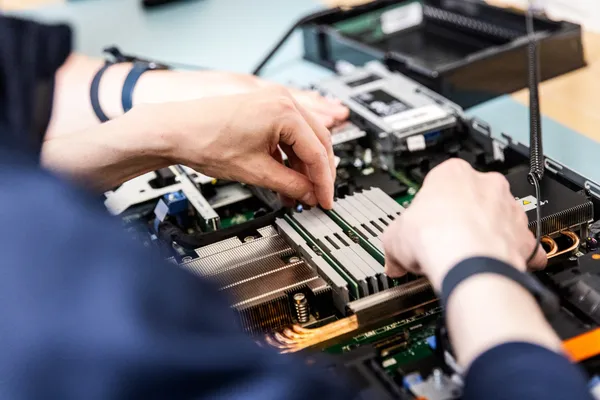

The development of server drives took a significant leap forward with the introduction of solid-state drives in the 1980s. In contrast to traditional HDDs (hard disk drives), they make use of NAND flash memory. These drives have no moving components, which is one factor that contributes to the increased reliability of SSDs and helps ensure an extended period of reliable hosting service. With an SSD, the rate of component deterioration is far lower, as is the probability that data will be lost as a result of physical damage. The speed ceiling is notably higher as well. Generally speaking, an SSD can be about 10 times quicker than an HDD.

Processor performance is no longer the limiting factor for the majority of server systems. Servers can be limited by their storage infrastructure, though. This is illustrated by the fact that access latencies of hard drives are measured in milliseconds, whereas those of SSDs are measured in hundreds of microseconds. An SSD can therefore be a significant storage performance upgrade, and it may breathe new life into server systems that are a few years old. In general, an SSD may provide the greatest performance improvement of any upgrade to a server system.
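
To make the millisecond-versus-microsecond gap concrete, the following minimal Python sketch converts an access latency into the number of operations a drive can complete per second when requests are issued one at a time. The function name and the two latency values are illustrative assumptions chosen to match the orders of magnitude mentioned above, not measurements of any particular drive.

```python
def serial_iops(latency_seconds: float) -> float:
    """Upper bound on operations per second when requests are issued one at a time."""
    if latency_seconds <= 0:
        raise ValueError("latency must be positive")
    return 1.0 / latency_seconds


# Assumed, illustrative latencies (not measured values):
hdd_latency = 5e-3    # 5 milliseconds
ssd_latency = 500e-6  # 500 microseconds

hdd_iops = serial_iops(hdd_latency)
ssd_iops = serial_iops(ssd_latency)

print(f"HDD: about {hdd_iops:.0f} operations per second")
print(f"SSD: about {ssd_iops:.0f} operations per second")
print(f"Ratio: about {ssd_iops / hdd_iops:.0f}x")
```

Real drives handle many requests in parallel, so actual figures are higher on both sides, but the ratio shows why storage latency dominates once the processor is no longer the bottleneck.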

NVMe SSD vs. SATA SSD

For modern use cases, not surprisingly, SSDs are increasingly the primary storage choice. It’s a no-brainer that SSDs can read and write data much more quickly than HDDs, but how about NVMe SSD versus SATA SSD? SSDs communicate with the rest of a server system via either the SATA or the NVMe protocol. Here is a quick comparison between SATA SSDs and NVMe SSDs.

Non-Volatile Memory Express, or NVMe for short, is a technology that even further enhances solid state drive performance. Compared to conventional SATA SSD storage methods, it is a quicker and even more effective method of writing and retrieving data from SSDs. In short, NVMe SSD storage technology may further assist in lowering latency, increasing data transfer speeds, and enhancing performance and reliability all around, but let’s elaborate on its characteristics and benefits in more detail in this article.

Because of their architecture, NVMe SSDs will generally be quicker than SATA SSDs. Even though both use NAND memory to store data, the speed of delivery differs because of the way the data is transported. With a SATA SSD, data is sent through a SATA controller, and the overhead of that transmission path slows down the speed at which it can be moved.

NVMe SSDs, on the other hand, attach directly to the PCIe bus and do not pass through a SATA host controller, so the speed of data transfer depends on the processors that request the transfer and on the speed of the PCIe bus. SATA, in contrast, uses the Advanced Host Controller Interface (AHCI), a standard interface through which storage devices and the controller interact. Hence, NVMe SSDs can provide increased bandwidth and decreased latency, using a modern data transfer protocol that takes advantage of the CPU’s processing capability. NVMe SSDs will also continue to get faster along with the latest PCIe generations. NVMe technology is also very scalable, as it relies on PCIe lanes rather than on a SATA controller interface.
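
The bandwidth difference between the two interfaces can be estimated from their published line rates. The sketch below is a rough calculation, not a benchmark: it uses the nominal signaling rates and line encodings of SATA III (6 Gb/s, 8b/10b) and PCIe 3.0 and 4.0 (8 and 16 GT/s per lane, 128b/130b), and it assumes a four-lane link, which is a common but not universal configuration for NVMe drives. The function name is hypothetical, and protocol overhead above the link layer is ignored.

```python
def usable_mb_per_s(gigatransfers_per_s: float, payload_bits: int,
                    total_bits: int, lanes: int = 1) -> float:
    """Link bandwidth in MB/s after line-encoding overhead (protocol overhead ignored)."""
    bits_per_second = gigatransfers_per_s * 1e9 * payload_bits / total_bits * lanes
    return bits_per_second / 8 / 1e6


# SATA III: 6 Gb/s on a single link with 8b/10b encoding.
sata3 = usable_mb_per_s(6.0, 8, 10)

# PCIe 3.0 and 4.0 with 128b/130b encoding; four lanes is an assumption.
pcie3_x4 = usable_mb_per_s(8.0, 128, 130, lanes=4)
pcie4_x4 = usable_mb_per_s(16.0, 128, 130, lanes=4)

for name, value in [("SATA III", sata3), ("PCIe 3.0 x4", pcie3_x4), ("PCIe 4.0 x4", pcie4_x4)]:
    print(f"{name}: about {value:.0f} MB/s ({value / sata3:.1f}x SATA III)")
```

These are ceilings set by the link itself; what a given drive delivers also depends on its controller and flash. Adding lanes or moving to a newer PCIe generation raises the ceiling, which is the scalability the paragraph above describes.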

NVMe SSD and SATA SSD vs. HDD

HDDs were the primary storage device used by the majority of web hosting companies in the past. The technology underlying HDDs is simple. Data is stored on a metal platter, or disk, that has a thin magnetic coating on it. The binary values 0 and 1 are written on the platter by a read/write head mounted on an arm that moves across it. To retrieve that data, the head moves to the same location and reads it back. The RPM (revolutions per minute) is crucial for HDD performance, and an HDD with a higher RPM may perform better. Even so, no matter how quickly the platters spin, an HDD lacks the efficiency and speed of a SATA SSD or NVMe SSD.

In addition, HDDs require more power than SSDs because of their moving parts. This may not have a significant impact when deploying a single dedicated server, but it can matter when running dozens of dedicated servers or more. It is also worth noting that storage components account for a significant portion of a server’s energy consumption, with a figure of about 35 percent typically cited.
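
A quick back-of-the-envelope calculation shows how this scales with fleet size. The sketch below takes the 35 percent storage share cited above as its default; the per-server power draw of 300 W and the fleet sizes are purely hypothetical inputs, and the function name is an assumption.

```python
HOURS_PER_YEAR = 24 * 365


def annual_storage_kwh(servers: int, watts_per_server: float,
                       storage_share: float = 0.35) -> float:
    """Yearly energy attributable to storage, in kWh, for servers running continuously."""
    if not 0 <= storage_share <= 1:
        raise ValueError("storage_share must be between 0 and 1")
    return servers * watts_per_server * storage_share * HOURS_PER_YEAR / 1000.0


# Hypothetical average draw per server; substitute your own measurements.
ASSUMED_WATTS = 300.0

for fleet_size in (1, 12, 50):
    kwh = annual_storage_kwh(fleet_size, ASSUMED_WATTS)
    print(f"{fleet_size:>3} servers: about {kwh:,.0f} kWh per year for storage")
```

For one server the storage share is modest, but it grows linearly with the number of servers, so any per-drive saving is multiplied across the fleet.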

So, have HDDs become totally irrelevant for dedicated server deployments compared to NVMe SSD and SATA SSD? No, you can’t put it that way. It depends on the use case. For some use cases, write endurance can be an issue. An SSD’s NAND flash memory tolerates only a limited number of rewrites and is also susceptible to bit rot if data is left on it for extended periods of time. These issues may become worse as NAND flash devices age, gain capacity, and are used more often.
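
Whether write endurance matters for a given workload can be estimated with simple arithmetic. The sketch below assumes the drive vendor publishes an endurance rating in terabytes written (commonly abbreviated TBW) and that the workload writes at a steady daily rate. The function name and all example numbers are hypothetical.

```python
def endurance_years(rated_tbw: float, daily_writes_tb: float) -> float:
    """Years until the rated write volume is used up at a steady daily write rate."""
    if rated_tbw <= 0 or daily_writes_tb <= 0:
        raise ValueError("both values must be positive")
    return rated_tbw / daily_writes_tb / 365


# Hypothetical drive rated for 1,200 TB written, under two assumed workloads.
light_workload = endurance_years(rated_tbw=1200, daily_writes_tb=0.5)
heavy_workload = endurance_years(rated_tbw=1200, daily_writes_tb=5.0)

print(f"Light workload: about {light_workload:.1f} years")
print(f"Heavy workload: about {heavy_workload:.1f} years")
```

A write-heavy workload can use up the same rating ten times faster than a light one, which is why endurance is a concern for some use cases and irrelevant for others.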

Because they often pack more capacity per drive, HDDs in server systems may offer superior long-term data retention, which helps to guarantee integrity and reliability over time. HDDs are therefore widely used, also for cost reasons, in data-storage applications such as backup and archive storage that don’t need quick data access. HDDs can also be a good choice for very large storage applications, NAS, and RAID arrays. NAS (network-attached storage) enables several users and devices connected to a local area network (LAN) to access data from a centralized storage area on the network. RAID (redundant array of independent disks) is a kind of storage system that enhances the performance, reliability, and accessibility of data storage by combining multiple physical disk units into one virtualized logical unit. So, HDDs in server systems are finding their niche in durability and cost-effectiveness.

No doubt about it, SSDs are more reliable and faster than HDDs, but they are also more expensive, which is the main reason they are not used in all dedicated server configurations. The higher expense is readily justified in high-performance dedicated server setups, where server performance may increase significantly with SSDs. For demanding applications and fast-growing websites that serve big audiences, this speed difference can be quite helpful.

Quite a few websites and lightweight business applications function well without SSDs, though. For example, a website built from static HTML files that doesn’t use a lot of server resources won’t gain much from moving from HDDs to SSDs. Similarly, a relatively small blog with up to 1,000 page views per day can use content delivery networks (CDNs) and caching to control demand and stay on less expensive hard drive-based dedicated servers. SSDs do have advantages, although not necessarily in low-profile web hosting and on low-demand servers. Under these circumstances there isn’t much room for extravagant hardware, because web hosting companies may operate on very tight margins while their clients don’t necessarily need it for their operations.
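
A rough estimate shows why such a site places little load on its disks. The sketch below converts daily page views into disk reads per second; the number of disk reads per page view and the peak factor are hypothetical inputs, and the function name is an assumption.

```python
SECONDS_PER_DAY = 24 * 60 * 60


def disk_reads_per_second(page_views_per_day: float, reads_per_view: float,
                          peak_factor: float = 1.0) -> float:
    """Estimated disk reads per second, scaled by a peak-to-average factor."""
    return page_views_per_day * reads_per_view * peak_factor / SECONDS_PER_DAY


# 1,000 page views per day, as in the example above.
# Assumed: 20 uncached disk reads per view and peaks at 10x the daily average.
peak_reads = disk_reads_per_second(1000, reads_per_view=20, peak_factor=10)
print(f"Estimated peak load: about {peak_reads:.1f} disk reads per second")
```

Even with these generous assumptions the load stays at a few reads per second, and caching or a CDN lowers it further, so faster storage would bring little visible benefit here.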

Applications in Ecommerce, Banking, AI, ML

Back to NVMe storage technology. As noted, NVMe drives use an interface based on PCIe rather than SATA, which provides more bandwidth to the flash memory and allows its capabilities to be used more fully. Latencies decrease even further as well, from the hundreds of microseconds that SATA SSDs provide to, in favorable cases, tens of microseconds with NVMe-equipped dedicated servers. IOPS and throughput to and from the flash memory also increase significantly with NVMe: depending on the PCIe generation and the number of lanes, PCIe can provide more than 25 times the data transfer capability of SATA.

Support for a variety of form factors and connectors is also a big plus of NVMe technology, giving storage architectures the flexibility to include flash-based alternatives. By streamlining interfaces, the direct PCIe connection makes writing data to and retrieving data from SSD storage easier. PCIe and NVMe can therefore reduce bottlenecks by replacing less efficient SATA buses. This may translate into quicker and more effective storage options for a range of uses.

Although NVMe dedicated servers have the potential to transform many industries, they are already making great strides in a few key ones. With the introduction of NVMe technology, applications in banking and ecommerce are undergoing significant changes. The ability to handle data at speed translates into real-time data accessibility, which is critical for these industries. The speed of NVMe can support efficiency and customer satisfaction in situations where banking transactions, such as foreign currency trading, or online shopping processes depend on split-second decisions.

NVMe technology is also highly valued in mission-critical applications such as healthcare, video game delivery, and military applications, all of which may place a high premium on low latency. With NVMe, data can be retrieved and processed more quickly, which paves the way for improved and more timely decision-making.

In addition, there are many advantages for the fields of artificial intelligence (AI) and machine learning (ML). As a result of the faster data access made possible by NVMe technology, AI- and ML-powered applications are seeing more widespread use. Because NVMe storage can feed data to the processors faster, algorithms can now analyze large datasets more quickly and effectively.

Using RAID Technology in Dedicated Server Setups

By combining the strength of multiple hard drives, RAID, or Redundant Array of Independent Disks, provides a special combination of speed, capacity, and redundancy. RAID may provide a single large storage area and significantly increase server speed, depending on how the dedicated server is configured. Upon exploring the variations of RAID, such as RAID 0, RAID 1, RAID 5/6, and RAID 10, it becomes evident that each variety may offer distinct advantages for capacity, performance, and reliability.

As noted, HDDs are often used because they are reasonably affordable and fit well with long-term data storage at limited cost, although their mechanical design may cause them to lag in performance and reliability. RAID can help mitigate these drawbacks and reduce the risks associated with HDDs, while continuing to make use of their benefits.

SSDs, on the other hand, prioritize performance and speed, as covered earlier in this article. Because they don’t have any moving components, SSDs may perform significantly faster than HDDs while having a lower failure rate. Both HDDs and SSDs can be used in a RAID setup, although the details of the setup differ for each.

Examining Various Types of RAID

- RAID 0 (striped) – This fundamental RAID setup increases performance by combining multiple disks into a single volume and spreading data across them. Because it lacks redundancy, the failure of a single disk loses the whole array, so it is usually only used for workloads where speed matters more than the risk of data loss.

- RAID 1 (mirrored) – With RAID 1, two identical disks are used and data is mirrored, or replicated, across both. This provides redundancy, allowing operation to continue even if one drive fails. RAID 1 improves read performance because data can be read from either disk in the array. However, every write has to go to both disks, which can increase write latency, and two drives are required for the usable capacity of one.

- RAID 5/6 (striped with distributed parity) – RAID 5 and 6 keep data available even when one disk (RAID 5) or two disks (RAID 6) fail. They improve read speed, but they typically rely on a dedicated hardware controller for good write performance, because parity has to be calculated on every write. These levels are suitable for general-purpose systems where most transactions are reads, such as web servers and file servers. For write-heavy environments such as busy database servers, however, RAID 5 or 6 may not be the best option due to potential performance degradation.

- RAID 10 (mirrored and striped) – RAID 10 is a combination of RAID 0 and RAID 1 and requires a minimum of 4 disks. Data is striped across pairs of mirrored drives, so the array keeps working without data loss when a drive fails, as long as its mirror partner survives. As with RAID 1, only half of the disks’ total capacity is usable, but read and write speeds are better and rebuild times are short. This is often the preferred RAID level for those who prioritize speed while also requiring redundancy.
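
The capacity and redundancy trade-offs in the list above can be sketched as a small calculator. This is an illustrative sketch rather than a sizing tool: the names `RaidSummary` and `raid_summary` and the example disk counts are assumptions, all disks are assumed to be the same size, and the arithmetic follows the standard textbook rules for each level rather than the behavior of any specific controller.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RaidSummary:
    level: str
    usable_tb: float          # capacity available for data
    guaranteed_failures: int  # drive failures survived in the worst case


def raid_summary(level: str, disks: int, disk_tb: float) -> RaidSummary:
    """Textbook capacity and fault tolerance for an array of equal-sized disks."""
    if level == "0" and disks >= 2:
        return RaidSummary(level, disks * disk_tb, 0)
    if level == "1" and disks == 2:
        return RaidSummary(level, disk_tb, 1)
    if level == "5" and disks >= 3:
        return RaidSummary(level, (disks - 1) * disk_tb, 1)
    if level == "6" and disks >= 4:
        return RaidSummary(level, (disks - 2) * disk_tb, 2)
    if level == "10" and disks >= 4 and disks % 2 == 0:
        # Worst case: the second failure hits the mirror partner of the first.
        return RaidSummary(level, disks // 2 * disk_tb, 1)
    raise ValueError(f"unsupported combination: RAID {level} with {disks} disks")


# Example: 4 TB disks (a hypothetical size) in typical minimum-style layouts.
for level, disks in [("0", 4), ("1", 2), ("5", 4), ("6", 4), ("10", 4)]:
    print(raid_summary(level, disks, disk_tb=4.0))
```

With four disks, RAID 0 exposes all of the raw capacity, RAID 5 gives up one disk’s worth to parity, and RAID 6 and RAID 10 both give up two, which is the price paid for their additional protection.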

When server availability and uptime are a top priority for a dedicated server setup, RAID is an invaluable tool. A redundant RAID configuration keeps interruptions to a minimum by keeping data accessible even in the event of a disk failure.

The best RAID configuration depends largely on your particular goals and use case. An array without redundancy can be the best option if cutting expenses is your top concern and you can live with occasional outages and the possibility of losing important data. This option can make sense if you can tolerate your website or business application being down for some time or losing some of your data (a risk that can, of course, be covered by backups).

If the data is expendable but speed is essential (as with caching), then RAID 0 can be a fitting option. It works well when performance has to be improved, but there is a real possibility of losing data (again, a risk that can be covered by backups).

RAID 1, by contrast, provides a good compromise between data redundancy and cost-effectiveness. In this mirrored setup, data is copied to two disks, so if one drive dies, the other still holds an identical copy.

Configurations with RAID 5 and 6 are especially suitable for web servers, read-heavy databases, and other cases where read-intensive processes are important. These RAID levels stripe data across multiple drives together with parity information, which makes improved read speed and a certain amount of fault tolerance possible. With double parity, RAID 6 offers an extra degree of data protection.
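
The idea behind parity can be shown in a few lines. The sketch below is a simplified illustration of single parity as used conceptually in RAID 5: the parity block is the byte-wise XOR of the data blocks, so any one missing block can be recomputed from the rest. It is not how a real controller is implemented; real arrays rotate parity across the drives and work on much larger stripes, and RAID 6 adds a second, differently calculated parity block. The function name and sample data are hypothetical.

```python
from functools import reduce


def xor_blocks(blocks):
    """Byte-wise XOR of equally sized blocks."""
    return bytes(reduce(lambda a, b: a ^ b, column) for column in zip(*blocks))


# Three data blocks, as if written to three drives, plus one parity block.
data = [b"WEB1", b"DB02", b"LOG3"]
parity = xor_blocks(data)

# Simulate losing the second drive and rebuild its block from the survivors.
survivors = [data[0], data[2], parity]
rebuilt = xor_blocks(survivors)

print("Rebuilt block matches the lost one:", rebuilt == data[1])
```

The same property explains the write penalty mentioned for these levels: every change to a data block also requires the parity block to be recalculated and rewritten.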

The most versatile of these levels is RAID 10, which is a mix of RAID 1 and 0. It mirrors data for redundancy and stripes it for improved speed. This makes RAID 10 a popular option for anyone looking for storage that combines speed and data protection.

RAID Systems: Hardware vs. Software

Dedicated servers can come with software RAID or hardware RAID. With software RAID, the array is managed by the operating system using the server’s own compute resources. Its primary benefit is that there is no need for an extra hardware RAID controller, which lowers the cost. It also allows dedicated server users to change array configurations without being constrained by a hardware RAID controller.

Software RAID’s main disadvantage is that it is usually slower. Both the read and write performance of a RAID setup and other server functions may be slowed down by it. Replacing damaged disks using software RAID is a bit trickier. Before one replaces the disk, the system must be told to stop using it.
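
As one concrete illustration of that last step, the sketch below builds the usual command sequence for swapping a disk under Linux software RAID managed with `mdadm`. That tool is an assumption here, since the article does not name one, and the array and partition names are hypothetical. The commands are only printed, not executed; on a real server they need root privileges, and the replacement disk must be partitioned first.

```python
import shlex
from typing import List


def replacement_commands(array: str, failed: str, replacement: str) -> List[List[str]]:
    """Commands to retire a failing member of an md array and add its replacement."""
    return [
        ["mdadm", "--manage", array, "--fail", failed],     # stop using the disk
        ["mdadm", "--manage", array, "--remove", failed],   # detach it from the array
        ["mdadm", "--manage", array, "--add", replacement], # add the new disk; the rebuild starts
    ]


# Hypothetical device names for illustration only.
commands = replacement_commands("/dev/md0", "/dev/sdb1", "/dev/sdc1")
for command in commands:
    print(shlex.join(command))
```

The physical swap happens between the second and third command, which is the extra coordination that a hot-swap capable hardware controller usually handles on its own.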

Hardware RAID uses a dedicated controller to maintain the RAID configuration without relying on the operating system. The disks are managed by the RAID controller itself, so the work does not take processing power away from the server. As a result, data may be read and written more quickly. Replacing a damaged disk is also straightforward: one can simply take it out and put the new one in.

One disadvantage of hardware RAID is that it requires extra hardware in the form of the controller, which generally makes it more expensive than software RAID. Worldstream offers cost-efficient dedicated server configurations with hardware RAID included, though, which offsets this drawback.

Your needs and the limits of your dedicated server budget should guide your decision between software RAID and hardware RAID. Hardware RAID often comes with a slightly higher price tag than software RAID, but it offers many advantages, including increased performance, freedom from the restrictions imposed by software RAID, and more choices for customization. If your budget allows for it, a hardware RAID server system is the way to go.

Worldstream's Dedicated Servers

Founded in 2006 by childhood friends who shared a passion for gaming, Worldstream has evolved into an international IT infrastructure (IaaS) provider. Our mission is to create the ultimate digital experience together with you and our partners.

We advise, design, and provide advanced infrastructure solutions, offering peace of mind to IT leaders at tech companies. With a commitment to high-quality infrastructure, industry-leading service, and strong partnerships, we simplify IT leaders’ lives and provide 24/7 support.

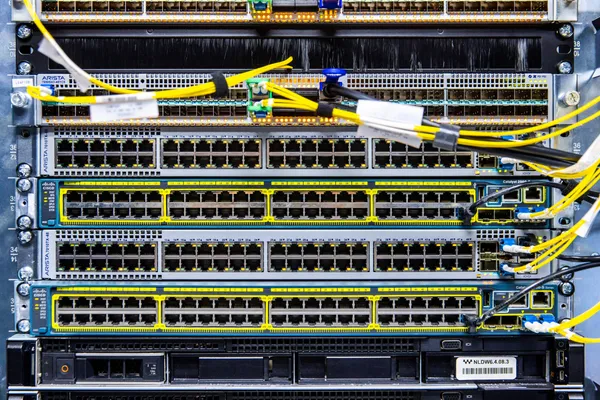

Worldstream offers dedicated servers in two varieties: fully customizable servers and fixed instant delivery server setups. Both are available with NVMe SSD, SATA SSD, and/or HDD, in hardware and software RAID configurations. We currently have more than 15,000 dedicated servers installed for our clients in Worldstream’s data centers in the Netherlands (Naaldwijk) and Germany (Frankfurt). These dedicated servers are backed by Worldstream’s proprietary global network. The maximum bandwidth consumption on this network is only 45%, giving server users optimal scalability and DDoS defense guarantees.


Latest blogs

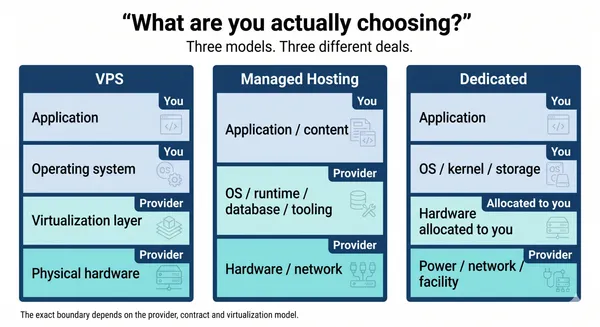

VPS, Managed Hosting, or Dedicated: What You Are Actually Choosing in 2026

Knowledge blog

The 4 Elements of the Worldstream DNA

Knowledge blog

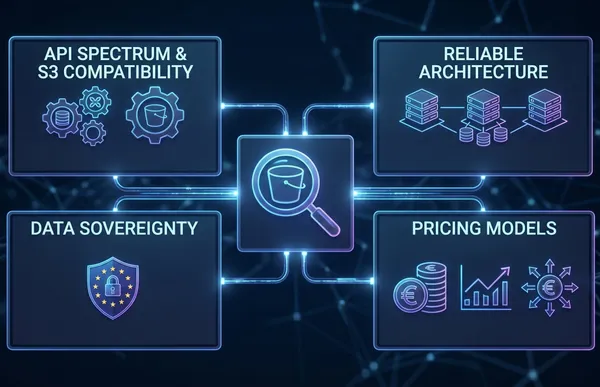

S3 Object Storage in Europe: What to Evaluate Before You Store a Single Byte

Knowledge blog

DDR5 Memory Prices Surged 307%. Here Is What That Means for Your Infrastructure Budget.

News

Pricing update. Price adjustment effective May 1, 2026

News

Worldstream and Cubbit launch independent, sovereign S3 cloud storage for Dutch enterprises

News