The Great Recalibration: Cloud Repatriation, Egress Economics, and Hybrid Architectures for 2026

For years, “Cloud First” was treated like a law of physics: migrate, standardize, move fast, and let the hyperscaler worry about the boring stuff.

That approach did deliver real wins—especially when demand is unpredictable, teams are small, and speed matters more than unit economics. But as organizations mature, a different reality appears: some workloads don’t benefit from renting elasticity forever.

That’s why cloud strategy is quietly shifting from “Cloud First” to “Workload Appropriate.” One widely cited indicator: in a Barclays CIO survey (as reported publicly by DataBank), 86% of enterprise CIOs said they plan to move at least some workloads back to private infrastructure or on-prem.

This isn’t “anti-cloud.” It’s the industry growing up.

TL;DR

- Cloud repatriation is becoming normal, not a weird exception.

- The biggest surprise for many teams isn’t compute—it’s data movement (egress) and network plumbing (NAT gateways).

- The “hidden tax” pattern: you may pay for Data Transfer Out and also pay per-GB NAT processing on top, depending on the traffic path.

- The most pragmatic 2026 architecture is Hybrid Edge: keep cloud-native services where they shine, but use dedicated infrastructure to neutralize bandwidth economics and regain control.

1) Why “Cloud First” is fracturing: the steady-state problem

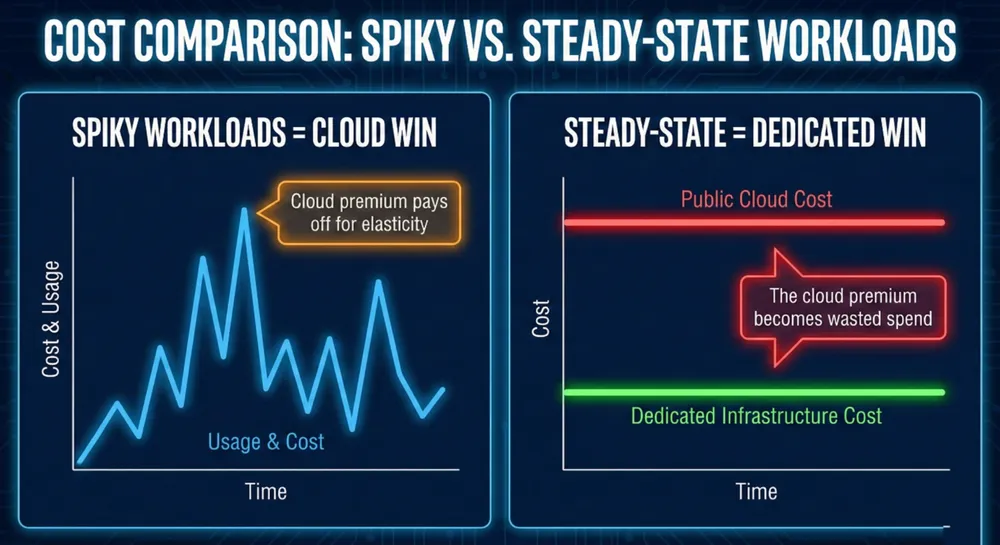

Cloud is priced like an insurance product: you pay a premium for optionality (instant scale, managed services, global reach). That premium is worth it when usage is spiky or uncertain.

But many workloads aren’t spiky. They’re steady-state:

- Core databases

- Always-on APIs

- Continuous streaming / media delivery

- High-volume downloads (patches, datasets, backups)

- AI inference services with predictable traffic

Andreessen Horowitz describes this tension as a “trillion dollar paradox”: cloud can be fantastic for velocity, but expensive at scale; they model repatriation as potentially cutting a large portion of cloud spend in mature companies.

So the new question isn’t “Should we be in the cloud?”

It’s: “Which workloads actually earn the cloud premium?”

2) The cost center most teams underprice: egress (and the plumbing around it)

Most cloud bills have three meters:

- Compute (vCPU, RAM, managed runtimes)

- Storage (GB-month, IOPS/throughput, requests)

- Data movement (egress, cross-AZ, inter-region, gateways)

Compute can often be optimized with reservations, rightsizing, autoscaling, and platform work. Data movement is harder—because it’s tied to how your application delivers value.

The “Hotel California” dynamic (in plain English)

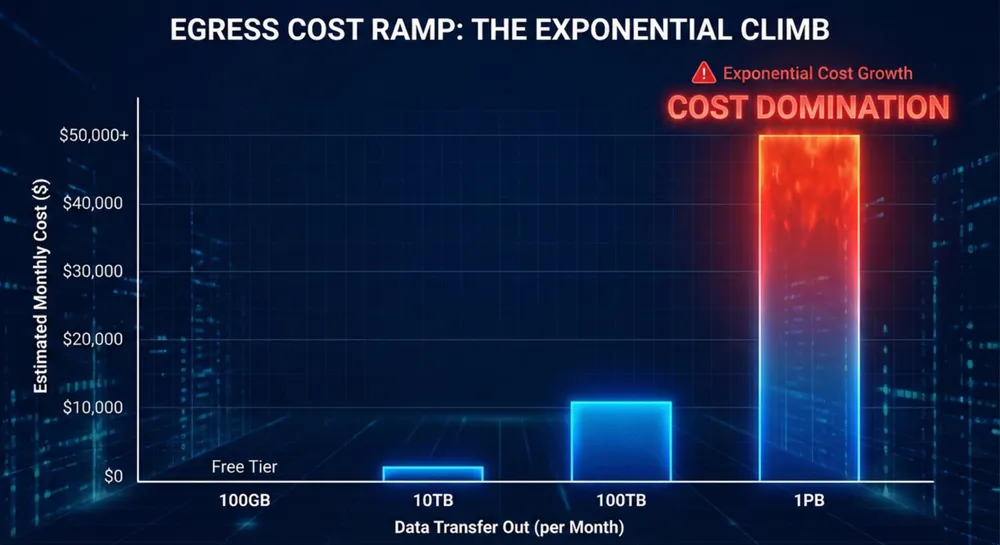

Cloud ingress is commonly free; cloud egress is not. For example, AWS does not charge for the first 100 GB/month of “Data Transfer Out” to the internet, aggregated across services and regions (with exceptions). After that, outbound traffic is billed in tiers that vary by region and service.

Egress math that won’t get you roasted

Assumptions:

- Uses decimal units: 1 TB = 1,000 GB and 1 PB = 1,000,000 GB (not TiB/PiB).

- Uses illustrative list pricing. Actual pricing depends on region, service path (internet vs CDN vs private connectivity), discounts, and contracts.

What 100 TB/month can look like (illustrative list pricing)

Using a commonly cited tier pattern (example only):

- 10,000 GB × $0.09/GB = $900

- 40,000 GB × $0.085/GB = $3,400

- 50,000 GB × $0.07/GB = $3,500

Total ≈ $7,800/month (before CDNs, delivery services, regional specifics, or discount programs)

What 1 PB/month can look like (illustrative list pricing)

Same illustrative pattern:

- First 10,000 GB × $0.09/GB = $900

- Next 40,000 GB × $0.085/GB = $3,400

- Next 100,000 GB × $0.07/GB = $7,000

- Remaining 850,000 GB × $0.05/GB = $42,500

Total ≈ $53,800/month

At this point, egress can dominate the entire bill—even if compute is “optimized.”
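To rerun this arithmetic for other volumes, a minimal sketch of a tiered calculator is below. It hard-codes the illustrative tier pattern from the two examples above (not a quote for any specific region), ignores any free monthly allowance just as the examples do, and the function and constant names are made up for this illustration.

```python
from typing import List, Optional, Tuple

# (tier size in GB, USD per GB); None means "everything above the earlier tiers".
# Illustrative list-price pattern taken from the examples in the text.
ILLUSTRATIVE_TIERS: List[Tuple[Optional[int], float]] = [
    (10_000, 0.09),
    (40_000, 0.085),
    (100_000, 0.07),
    (None, 0.05),
]


def tiered_egress_cost(gb: float, tiers=ILLUSTRATIVE_TIERS) -> float:
    """Return the monthly egress cost in USD for `gb` decimal gigabytes."""
    remaining = gb
    total = 0.0
    for size, price in tiers:
        if remaining <= 0:
            break
        # Bill at most one tier's worth of traffic at this tier's rate.
        billed = remaining if size is None else min(remaining, size)
        total += billed * price
        remaining -= billed
    return round(total, 2)


if __name__ == "__main__":
    for label, gb in [("100 TB", 100_000), ("1 PB", 1_000_000)]:
        print(f"{label}: ${tiered_egress_cost(gb):,.2f}/month")
```

Swap in your own region's published tiers and negotiated discounts before drawing conclusions from the output.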

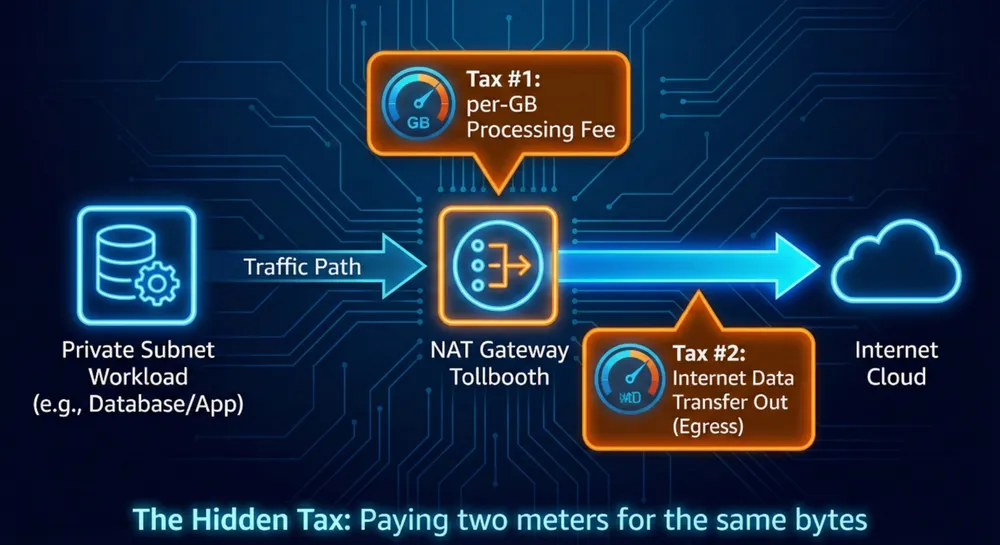

3) The hidden multiplier: NAT Gateways (the per-GB tollbooth)

In common best-practice VPC designs, workloads live in private subnets. To reach the internet (updates, package pulls, third-party APIs), they often route through a NAT Gateway.

Here’s the gotcha: NAT Gateway is usually priced with:

- an hourly charge, and

- a per-GB processing charge

That per-GB processing can be material at scale.

The “double meter” pattern (with an important nuance)

If your outbound traffic goes:

private subnet → NAT Gateway → destination

…you will pay the NAT processing fee on those bytes.

You may also pay other data transfer charges depending on where the traffic is going and how it exits the cloud network:

- If it’s going to the public internet, you can end up paying both internet Data Transfer Out and NAT processing.

- If it’s going to certain cloud services via public endpoints, you may not pay “internet egress” the same way—but you still pay the NAT processing meter (and potentially other transfer rules depending on path).

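This is the “hidden tax” most people only notice after the bill arrives: bytes through NAT are metered bytes. On AWS, for example, the processing charge applies to every GB the gateway handles regardless of direction, so inbound-heavy traffic such as package pulls and updates can be free as ingress and still incur NAT processing.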
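A rough sketch of the double-meter pattern follows. Every rate in it is a placeholder assumption (an hourly NAT charge, a per-GB NAT processing charge, and a flat internet egress rate instead of tiers), and the function names are hypothetical; substitute the figures from your provider's rate card.

```python
HOURS_PER_MONTH = 730  # assumed average month length


def nat_cost(gb: float, gateways: int = 1,
             hourly_rate: float = 0.045, per_gb_rate: float = 0.045) -> float:
    """NAT meter: hourly charge per gateway plus per-GB processing (placeholder rates)."""
    return gateways * hourly_rate * HOURS_PER_MONTH + gb * per_gb_rate


def nat_path_cost(gb: float, to_internet: bool, dto_rate: float = 0.09) -> dict:
    """Split the cost of `gb` routed through NAT into its separate meters.

    `dto_rate` is a flat placeholder for internet Data Transfer Out; it is
    applied only when the destination is the public internet.
    """
    nat = nat_cost(gb)
    dto = gb * dto_rate if to_internet else 0.0
    return {"nat": round(nat, 2), "dto": round(dto, 2), "total": round(nat + dto, 2)}


if __name__ == "__main__":
    # 10 TB/month through one NAT gateway, to the internet vs. to a cloud service endpoint.
    print(nat_path_cost(10_000, to_internet=True))
    print(nat_path_cost(10_000, to_internet=False))
```

The second call is the interesting one: even with no internet egress charge, the NAT meter keeps running.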

4) Which workloads to repatriate (and which to leave)

Repatriation shouldn’t be ideological. It should be portfolio management.

Strong candidates to repatriate (or move to dedicated infrastructure)

- Predictable baseline: consistently high utilization, 24/7

- High outbound traffic: bandwidth-heavy delivery, downloads, media, datasets

- Data gravity: large persistent datasets that make movement expensive

- Strict control requirements: auditability, tenant isolation, known physical location

- Performance density: high sustained CPU/I/O without multi-tenant variance

Better left in public cloud (at least for now)

- Spiky / event-driven demand

- Short-lived jobs (batch, CI/CD, ephemeral environments)

- Managed service leverage you’d hate to rebuild (when the service truly earns its cost)

- Global products needing many regions fast

Your goal is not “leave the cloud.”

Your goal is to stop paying the cloud premium where you don’t collect the benefits.
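One way to make the two lists above operational is a simple first-pass triage. The sketch below is only a heuristic: the `Workload` fields, the thresholds (60% utilization, 50 TB/month egress, 100 TB datasets, 3x peak-to-average), and the labels are all assumptions chosen for illustration, not figures from any benchmark.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    name: str
    avg_utilization: float      # 0.0-1.0, averaged over 24/7
    peak_to_avg: float          # peak demand divided by average demand
    egress_tb_month: float      # outbound traffic per month
    dataset_tb: float           # size of persistent data ("data gravity")
    short_lived: bool = False   # batch, CI/CD, ephemeral environments
    needs_many_regions: bool = False


def placement_hint(w: Workload) -> str:
    """Return a first-pass placement hint using assumed thresholds."""
    dedicated_signals = sum([
        w.avg_utilization >= 0.6,   # predictable, high baseline
        w.egress_tb_month >= 50,    # bandwidth-heavy delivery
        w.dataset_tb >= 100,        # large persistent datasets
    ])
    cloud_signals = sum([
        w.peak_to_avg >= 3.0,       # spiky demand
        w.short_lived,
        w.needs_many_regions,
    ])
    if dedicated_signals >= 2 and cloud_signals == 0:
        return "dedicated candidate"
    if cloud_signals >= 1 and dedicated_signals == 0:
        return "keep in cloud"
    return "review case by case"


if __name__ == "__main__":
    media = Workload("media-delivery", 0.7, 1.5, 300, 500)
    ci = Workload("ci-runners", 0.1, 8.0, 0.5, 1, short_lived=True)
    for w in (media, ci):
        print(w.name, "->", placement_hint(w))
```

Control and compliance requirements are deliberately left out of the score; they tend to be yes/no constraints that are better reviewed by people than summed into a number.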

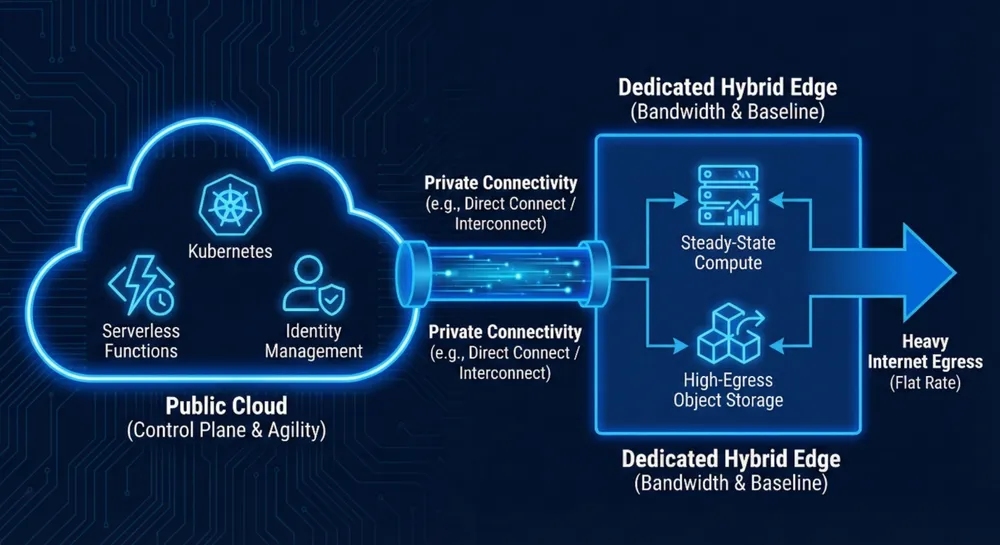

5) The Hybrid Edge: keep cloud agility, fix bandwidth economics

The most effective 2026 pattern is a hybrid design where:

- the cloud remains the control plane / innovation layer, and

- dedicated infrastructure becomes the economics layer for steady-state and bandwidth-heavy components.

(If you want a crisp definition: Hybrid Edge = hybrid cloud where a dedicated layer is used intentionally for the “heavy bytes” and baseline workloads.)

Pattern A: The Egress Gateway (changing the bandwidth economics, transparently)

Concept:

- Keep application logic in the cloud (Kubernetes, PaaS, serverless).

- Move heavy outbound delivery to a dedicated environment with high-capacity transit.

- Connect cloud ↔ dedicated using private connectivity (e.g., AWS Direct Connect).

Why it can work:

- Direct Connect has its own pricing components (port-hours + data transfer). Cloud providers publish these rate cards.

- The savings usually come from changing the cost surface: if your dedicated provider offers flatter bandwidth economics (bundled transit, high port speeds), you reduce the “punitive slope” of internet DTO list pricing.

Important reality check:

- Direct Connect delivers traffic to your Direct Connect location—it does not magically become “free internet.”

- Providers still charge DTO over private connectivity depending on region and location. The point isn’t “free,” it’s “different path + different rate card + different downstream bandwidth model.”
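Because the private path trades a lower per-GB rate for new fixed costs, it helps to compute a break-even volume before committing. The sketch below assumes a flat blended internet egress rate, a per-GB rate over private connectivity, a port-hour charge, and a flat monthly fee for bandwidth on the dedicated side. All four numbers are hypothetical placeholders, as are the function names.

```python
from typing import Optional

HOURS_PER_MONTH = 730  # assumed average month length


def internet_path_cost(gb: float, internet_rate: float = 0.07) -> float:
    """Monthly cost of sending `gb` straight to the internet (flat placeholder rate)."""
    return gb * internet_rate


def private_path_cost(gb: float, private_rate: float = 0.02,
                      port_hourly: float = 2.25,
                      dedicated_flat: float = 1500.0) -> float:
    """Monthly cost via private connectivity plus a flat-rate dedicated egress layer."""
    return gb * private_rate + port_hourly * HOURS_PER_MONTH + dedicated_flat


def break_even_gb(internet_rate: float = 0.07, private_rate: float = 0.02,
                  port_hourly: float = 2.25,
                  dedicated_flat: float = 1500.0) -> Optional[float]:
    """Volume above which the private path is cheaper, or None if it never is."""
    margin = internet_rate - private_rate
    if margin <= 0:
        return None
    fixed = port_hourly * HOURS_PER_MONTH + dedicated_flat
    return fixed / margin


if __name__ == "__main__":
    print(f"break-even: {break_even_gb():,.0f} GB/month")
    for gb in (20_000, 100_000, 500_000):
        print(gb, round(internet_path_cost(gb), 2), round(private_path_cost(gb), 2))
```

Below the break-even volume the fixed costs dominate and the gateway pattern loses money, which is why it only suits bandwidth-heavy workloads.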

Pattern B: The “Object Storage Offload” (stop paying egress for the heaviest bytes)

Concept:

- Keep compute and orchestration in cloud.

- Serve large assets (videos, large exports, backups, model artifacts) from an object store in dedicated infrastructure (e.g., S3-compatible storage like MinIO/Ceph).

- Your app issues signed URLs to the dedicated object store.

Why it works:

- You remove cloud egress for the largest payloads and keep cloud for what it’s good at: orchestration, identity, automation, managed control planes.
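To illustrate the signed-URL handoff, here is a generic expiring-link sketch built on an HMAC from the Python standard library. It is not the S3 Signature Version 4 scheme; with an S3-compatible store you would normally generate presigned URLs through its SDK instead. The host name, path, query parameter names, and shared secret are all hypothetical.

```python
import hashlib
import hmac
import time
from typing import Optional
from urllib.parse import parse_qs, urlencode, urlparse


def _signature(secret: bytes, path: str, expires: int) -> str:
    # Sign the method, object path, and expiry so none of them can be altered.
    message = f"GET\n{path}\n{expires}".encode()
    return hmac.new(secret, message, hashlib.sha256).hexdigest()


def sign_url(base_url: str, path: str, secret: bytes,
             ttl_seconds: int = 300, now: Optional[float] = None) -> str:
    """Cloud side: issue a time-limited download link for the dedicated store."""
    issued = time.time() if now is None else now
    expires = int(issued) + ttl_seconds
    query = urlencode({"expires": expires, "sig": _signature(secret, path, expires)})
    return f"{base_url}{path}?{query}"


def verify_url(url: str, secret: bytes, now: Optional[float] = None) -> bool:
    """Dedicated side: check expiry and signature before serving the object."""
    parsed = urlparse(url)
    params = parse_qs(parsed.query)
    try:
        expires = int(params["expires"][0])
        supplied = params["sig"][0]
    except (KeyError, ValueError):
        return False
    current = time.time() if now is None else now
    if current > expires:
        return False
    expected = _signature(secret, parsed.path, expires)
    return hmac.compare_digest(supplied, expected)


if __name__ == "__main__":
    link = sign_url("https://objects.example.net", "/exports/report.bin", b"shared-secret")
    print(link, verify_url(link, b"shared-secret"))
```

The design point is that the application in the cloud only emits a short URL; the heavy bytes are then served by the dedicated store and do not cross the cloud egress meter.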

Pattern C: Baseline on dedicated, burst to cloud

Concept:

- Baseline workloads run on dedicated (predictable cost).

- Burst capacity spills into cloud when needed (pay premium only when you use elasticity).

This avoids the classic mistake: paying for “elasticity insurance” 24/7.
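A small sketch of that comparison, under stated assumptions: demand is given as capacity units per hour, dedicated capacity is billed per unit per month, cloud capacity per unit-hour, and the prices in the example are invented. The "all cloud" figure assumes perfect autoscaling, which is generous to the cloud side.

```python
from typing import Sequence


def hybrid_cost(hourly_demand: Sequence[float], baseline_units: float,
                dedicated_unit_month: float, cloud_unit_hour: float) -> float:
    """Baseline on dedicated at a flat monthly price, overflow billed hourly in cloud."""
    burst_unit_hours = sum(max(0.0, d - baseline_units) for d in hourly_demand)
    return baseline_units * dedicated_unit_month + burst_unit_hours * cloud_unit_hour


def all_cloud_cost(hourly_demand: Sequence[float], cloud_unit_hour: float) -> float:
    """Everything in cloud, assuming capacity tracks demand hour by hour."""
    return sum(hourly_demand) * cloud_unit_hour


if __name__ == "__main__":
    # 730-hour month: 10 units steady, with a 30-hour burst to 25 units.
    demand = [10] * 700 + [25] * 30
    print("hybrid:   ", round(hybrid_cost(demand, 10, 60.0, 0.20), 2))
    print("all cloud:", round(all_cloud_cost(demand, 0.20), 2))
```

Try different `baseline_units` values against your real demand curve; sizing the baseline too high simply moves the always-on premium from the cloud bill to the dedicated one.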

6) Regulation and sovereignty: why “control” is moving up the stack

Economics is the loudest driver—but governance is the quiet accelerant.

DORA made resilience and third-party oversight non-negotiable (for finance)

DORA (the EU Digital Operational Resilience Act) has applied since 17 January 2025. It’s not a “move off cloud” mandate, but it does force financial entities to take operational resilience and third-party risk seriously.

EU regulators have also designated major tech firms as critical third-party providers under DORA, including AWS, Google Cloud, and Microsoft (as reported publicly in late 2025).

What this means in practice: your infrastructure strategy needs credible answers to:

- exit planning,

- dependency concentration,

- incident resilience,

- and provable operational control.

Jurisdiction risk isn’t paranoia; it’s legal structure

The U.S. CLOUD Act framework can require certain providers to disclose data within their “possession, custody, or control,” even if stored outside the U.S., depending on the situation.

For EU organizations, this is a governance input—not a headline. Practical takeaway: provider ownership, legal jurisdiction, and operating model matter when you’re designing for sovereignty.

(This is not legal advice—talk to counsel for your specific risk model.)

7) Compliance isn’t “more compliant on bare metal” — it’s “more controllable”

It’s tempting to claim dedicated infrastructure is automatically more compliant. That’s wrong.

Example: HIPAA. U.S. HHS guidance makes clear that using a cloud provider requires the right contractual and operational safeguards (including a BAA where required), and the organization still carries compliance obligations.

The honest claim is:

- dedicated environments can make certain controls easier to prove (isolation, access boundaries, known location),

- but compliance still depends on contracts, processes, and safeguards.

That honesty increases trust.

8) A 30-day action plan for IT leaders

If you want a real “workload appropriate” program (not a vibes-based migration), do this:

1) Break down your cloud bill by the three meters

- compute, storage, data movement

- identify which meter grows fastest with usage

2) Identify your “Egress Cliff”

- where outbound costs become material (often 20%+ of bill for data-heavy apps)

- model 50 TB, 100 TB, 250 TB, 1 PB scenarios using your region’s published tiers and any discount programs you qualify for

3) Audit NAT Gateway exposure

- find where private subnets route heavy bytes through NAT

- price it using your provider’s model (hourly + per-GB processing)

4) Pick one workload for a Hybrid Edge pilot

Choose the one with the cleanest “heavy bytes” boundary:

- downloads

- exports

- media delivery

- backups / artifacts

5) Design for reversibility

If you can’t roll back or swap providers, you’re not doing “smart hybrid”—you’re just creating a new lock-in.
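Steps 1 and 2 of the plan can start as a few lines of scripting over a billing export. The sketch below assumes the export has already been reduced to (category, USD) pairs; the category names and their mapping to meters are hypothetical, and the 20% threshold is the rule of thumb from step 2.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple

# Hypothetical mapping from billing categories to the three meters.
METER_BY_CATEGORY = {
    "ec2": "compute",
    "lambda": "compute",
    "s3-storage": "storage",
    "ebs": "storage",
    "data-transfer-out": "data movement",
    "nat-gateway-bytes": "data movement",
    "inter-az": "data movement",
}


def breakdown(line_items: Iterable[Tuple[str, float]]) -> Dict[str, Tuple[float, float]]:
    """Group spend by meter and return {meter: (usd, share_of_total)}."""
    totals: Dict[str, float] = defaultdict(float)
    for category, usd in line_items:
        totals[METER_BY_CATEGORY.get(category, "unclassified")] += usd
    grand_total = sum(totals.values())
    return {
        meter: (usd, usd / grand_total if grand_total else 0.0)
        for meter, usd in totals.items()
    }


def past_egress_cliff(result: Dict[str, Tuple[float, float]],
                      threshold: float = 0.20) -> bool:
    """True when data movement is at or above `threshold` of the bill."""
    _, share = result.get("data movement", (0.0, 0.0))
    return share >= threshold


if __name__ == "__main__":
    bill = [("ec2", 6000.0), ("s3-storage", 1500.0),
            ("data-transfer-out", 2000.0), ("nat-gateway-bytes", 500.0)]
    result = breakdown(bill)
    for meter, (usd, share) in result.items():
        print(f"{meter:14s} ${usd:>9,.2f}  {share:.0%}")
    print("past egress cliff:", past_egress_cliff(result))
```

Running it monthly and watching how the data-movement share trends is usually more informative than any single snapshot.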

Where Worldstream fits in the Hybrid Edge (without the marketing fog)

Hybrid Edge only works if the dedicated layer is:

- predictable, single-tenant, and automatable

- well-connected (low-latency paths, private cross-connect options)

- capable of handling high outbound traffic economically

Worldstream’s role in this architecture is straightforward: dedicated infrastructure in the Netherlands as the steady-state and bandwidth layer, integrated with your cloud layer via private connectivity and modern tooling—so you keep cloud agility while taking control of the meters that punish you at scale.

(And to be explicit: the savings depend on your traffic patterns, region pricing, and connectivity design. The goal is to model it honestly before you move anything.)

Closing: the era of “Cloud Smart”

The cloud era isn’t ending. The dogma is.

In 2026, the winning infrastructure strategy is not “all cloud” or “no cloud.” It’s cloud where it earns its premium, and dedicated where predictability, bandwidth, and control matter more.

The organizations that win won’t be the ones that rent the most infrastructure.

They’ll be the ones that know what to rent, what to lease (or own), and what to connect.

Frequently Asked Questions (FAQ)

What is cloud repatriation?

Cloud repatriation means moving some workloads out of a public cloud (like AWS, Azure, or Google Cloud) back to infrastructure you control, such as dedicated servers, private cloud, or on-prem. It’s usually done to reduce steady-state costs, make performance more predictable, or meet data control / compliance requirements. It’s typically a selective move (some workloads), not a full exit.